Teaching Quantum Systems to Remember

TL;DR: Quantum systems are powerful tools for predicting complex, time-dependent data, but measuring them destroys their memory. This paper introduces an architecture that tackles that problem by feeding each measurement result back into the system in real time, creating a quantum machine learning model that is fast, memory-capable, and runs on today's quantum hardware.

The ability to predict how complex systems evolve over time is central to solving some of the most important challenges in science, finance, and engineering. This includes modelling the quantum dynamics of physical systems, anticipating the behaviour of financial markets, and monitoring industrial processes, among others. The value of accurate time-series forecasting is hard to overstate.

Classical computing has served this purpose well, but as the systems being modelled grow more complex and data volumes expand, new computational approaches are needed. New research introduces a scalable architecture that could meaningfully advance this frontier.

The new paper is a collaboration between researchers at QuantumBasel, the Research Institute CODE at the University of the Bundeswehr Munich, and the Center for Quantum Computing and Quantum Coherence (QC2) at the University of Basel.

The Promise, and the Problem, of Quantum Reservoir Computing

Reservoir computing is a machine learning approach designed for time-dependent data, such as real-time market, weather, or logistics data.

Classical reservoir computing, pioneered by Jaeger in a widely cited technical report, demonstrated that fixed, randomly connected networks of nodes could perform remarkably well on machine learning tasks involving sequences, without having to retrain the entire network from scratch every time.
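To make the idea concrete, here is a minimal sketch of a classical echo state network in NumPy. The reservoir size, spectral radius, weight ranges, and the toy task (recalling the previous input) are illustrative assumptions, not values taken from Jaeger's report or from the paper discussed here. The point it shows is that the recurrent weights stay fixed and random, and only the linear readout is trained.

```python
import numpy as np

def run_reservoir(inputs, n_nodes=50, spectral_radius=0.9, seed=0):
    """Drive a fixed random reservoir with a 1-D input sequence and return its states."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-0.5, 0.5, size=n_nodes)      # fixed input weights (assumed range)
    w = rng.normal(size=(n_nodes, n_nodes))          # fixed recurrent weights
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))  # rescale spectral radius
    state = np.zeros(n_nodes)
    states = []
    for u in inputs:
        state = np.tanh(w @ state + w_in * u)        # reservoir update, never trained
        states.append(state)
    return np.array(states)

def fit_readout(states, targets, ridge=1e-6):
    """Train only the linear readout, here with ridge regression."""
    x = np.hstack([states, np.ones((len(states), 1))])   # append a bias column
    return np.linalg.solve(x.T @ x + ridge * np.eye(x.shape[1]), x.T @ targets)

def predict(states, weights):
    """Apply the trained linear readout to reservoir states."""
    x = np.hstack([states, np.ones((len(states), 1))])
    return x @ weights

# Toy task: reproduce the previous input from the current reservoir state.
rng = np.random.default_rng(1)
u = rng.uniform(-1, 1, 500)
states = run_reservoir(u)
target = np.roll(u, 1)              # target[k] = u[k-1]
washout = 50                        # discard the initial transient
weights = fit_readout(states[washout:], target[washout:])
pred = predict(states[washout:], weights)
```

Swapping the task only means changing `target`; the reservoir itself is left untouched.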

Quantum Reservoir Computing (QRC) is the quantum evolution of this approach: replace the classical reservoir with a quantum system, and its naturally high-dimensional Hilbert space and complex, non-linear quantum dynamics carry out the crucial task of finding patterns in the data.

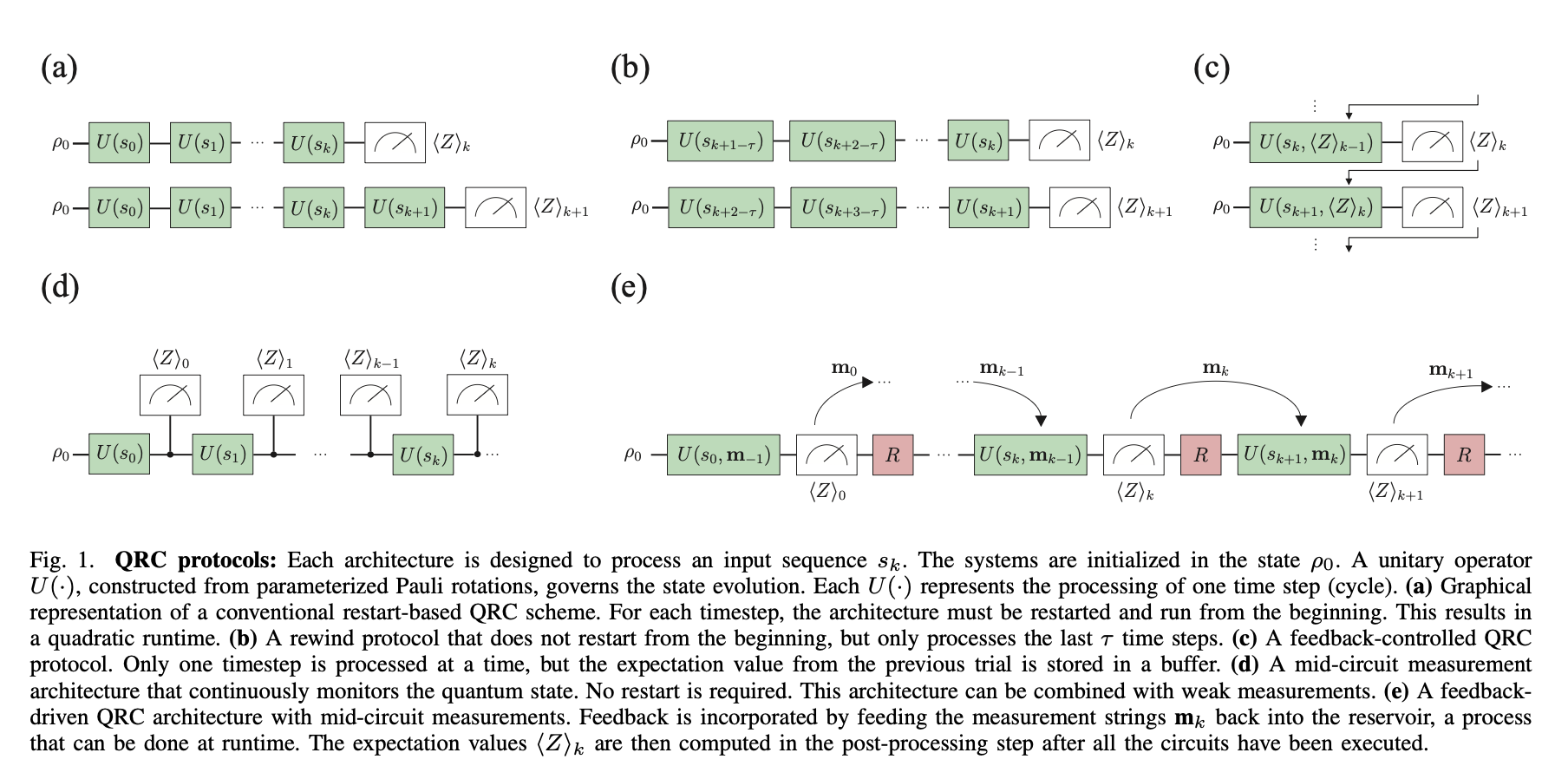

Researchers including Soriano, Giorgi, and Zambrini have already shown that QRC is a compelling framework for chaotic time series and quantum-native data processing tasks. (A chaotic time series is a data sequence that looks close enough to random that it is extremely hard to forecast without spotting its underlying, hard-to-perceive rules.) In practice, however, QRC has faced a persistent architectural challenge. Existing algorithms divide into two camps, and each comes with a significant drawback:

1. Slow but smart

Restart-based protocols reinitialise the quantum circuit from scratch at every step. This allows for feedback connections, where the output of one step informs the input or encoding of the next, which are essential for retaining memory of past inputs. The cost is steep: shot overhead (time and hardware resources used) scales with training length, number of reservoir realisations, and shots per step, making this approach slow and resource-intensive on NISQ (Noisy Intermediate-Scale Quantum) devices.
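A rough back-of-the-envelope count shows why this scaling hurts. The sketch below assumes a simple counting model: a restart-based protocol runs one circuit per time step, per reservoir realisation, per shot, whereas a continuous protocol runs one circuit per realisation per shot that covers every time step. The training length and shot count are hypothetical; only the figure of 128 realisations echoes the simulations described later in this article. Circuit depth, which also differs between the two approaches, is ignored.

```python
def restart_executions(train_len, n_reservoirs, shots_per_step):
    """Circuit executions when every time step restarts the circuit (assumed model)."""
    return train_len * n_reservoirs * shots_per_step

def continuous_executions(n_reservoirs, shots):
    """Circuit executions when one circuit spans all time steps (assumed model)."""
    return n_reservoirs * shots

train_len = 1000      # hypothetical number of training steps
n_reservoirs = 128    # number of reservoir realisations
shots = 1024          # hypothetical shots per step

restart = restart_executions(train_len, n_reservoirs, shots)
continuous = continuous_executions(n_reservoirs, shots)
print(restart, continuous, restart // continuous)  # 131072000 131072 1000
```

Under these assumptions the gap between the two protocols grows linearly with the length of the training sequence.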

2. Fast but forgetful

Continuous protocols use mid-circuit measurements to monitor the quantum state as it evolves without interruption. This is dramatically faster. The catch is that these methods have historically lacked structured feedback mechanisms. The readout of each time step is extracted and used for post-processing, but the information is not fed back into the next encoding step, so the system processes each data point without a working contextual memory of what came before.

A landmark 2024 paper by Kobayashi, Fujii, and Yamamoto was the first to demonstrate that feedback connections could address this memory problem. The paper presented here picks up where that work left off, targeting the speed constraints that remained. Historically, the result has been a forced choice: speed or memory, but not both. The new architecture from QuantumBasel and its collaborators is designed to eliminate that trade-off.

The Solution: Feedback During Coherence Time

The paper introduces a novel QRC scheme that integrates feedback connections into a continuous, mid-circuit measurement framework, operating entirely within the coherence time of the qubit.

The coherence time is the window during which a qubit maintains its quantum state before noise or environmental interference degrades it. Designing around this constraint rather than against it makes this architecture practically relevant for today's quantum technologies.

The mechanism works as follows. At each cycle, input data is encoded into the quantum system via quantum gates, which tilt the qubit’s state by a specific angle in a specific direction. More specifically, the encoding uses two-qubit rotation gates that inject both the current input value and the feedback signal simultaneously.

A mid-circuit measurement then captures the observables of the system at that moment. Critically, the raw measurement strings from the previous cycle, and not just the processed expectation values, are immediately fed back in as classical inputs into the gate rotations governing the next cycle. This is the classical feedforward step: the system carries a structured memory of what just happened directly into its next computation.

The linear readout layer, a standard linear regression model trained on the reservoir's observable outputs, remains unchanged from classical reservoir computing convention. What is novel is that the dataset of reservoir states used to train this readout now contains the influence of feedback, giving the linear model richer, more memory-laden features to work with.
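In symbols, a linear readout of this kind can be written as below. The notation is introduced here for illustration only: x_k is the vector of measured observables at cycle k, y_k the target, w and b the trained weights and bias, and λ an optional ridge penalty (λ = 0 gives ordinary linear regression). The exact regression settings used in the paper are not stated in this article.

```latex
\hat{y}_k = \mathbf{w}^{\top}\mathbf{x}_k + b,
\qquad
(\mathbf{w}, b) = \arg\min_{\mathbf{w},\, b}
\sum_{k} \left( y_k - \mathbf{w}^{\top}\mathbf{x}_k - b \right)^2
+ \lambda \lVert \mathbf{w} \rVert^2
```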

To isolate this feedback effect rigorously, the researchers perform a deliberate qubit reset after each measurement. This ensures no residual quantum information bleeds forward through the quantum state; any memory the system demonstrates comes from the classical feedback mechanism alone, not from an unintended carry-over of quantum effects. This methodology allows for a clean, interpretable claim about what the feedback connections are actually contributing.
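The cycle described above (encode, mix, measure, reset, feed back) can be imitated with a small statevector simulation. What follows is a toy sketch under stated assumptions, not the circuit from the paper: the choice of XX and YY rotations, the way bits are mapped to rotation angles, the `feedback_strength` parameterisation, and the three Z-basis features are all hypothetical. What it does preserve is the structure: each shot carries its own raw bitstring into the next cycle, and every cycle starts from a freshly reset |00⟩ state, so any memory can only travel through the classical feedback.

```python
import numpy as np

PAULI_X = np.array([[0, 1], [1, 0]], dtype=complex)
PAULI_Y = np.array([[0, -1j], [1j, 0]], dtype=complex)

def haar_unitary(dim, rng):
    """Sample a Haar-random unitary via QR decomposition of a complex Gaussian matrix."""
    z = (rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    d = np.diag(r)
    return q * (d / np.abs(d))      # phase correction so the sample is Haar distributed

def two_qubit_rotation(pauli, theta):
    """exp(-i * theta / 2 * P (x) P) for a single-qubit Pauli matrix P."""
    pp = np.kron(pauli, pauli)
    return np.cos(theta / 2) * np.eye(4) - 1j * np.sin(theta / 2) * pp

def run_feedback_reservoir(inputs, shots=1000, feedback_strength=1.5, seed=0):
    """Return per-cycle estimates of <Z0>, <Z1>, <Z0 Z1> for a two-qubit toy reservoir."""
    rng = np.random.default_rng(seed)
    reservoir = haar_unitary(4, rng)         # fixed random mixing operation
    ket00 = np.zeros(4, dtype=complex)
    ket00[0] = 1.0                           # state after reset
    bits = np.zeros(shots, dtype=int)        # each shot's last bitstring as 0..3, start at 00
    features = []
    for u in inputs:
        new_bits = np.empty_like(bits)
        for prev in range(4):                # group shots by their previous bitstring
            mask = bits == prev
            if not mask.any():
                continue
            z0, z1 = 1 - 2 * (prev >> 1), 1 - 2 * (prev & 1)   # bits mapped to +1/-1
            encode = (two_qubit_rotation(PAULI_Y, np.pi * u + feedback_strength * z1)
                      @ two_qubit_rotation(PAULI_X, np.pi * u + feedback_strength * z0))
            probs = np.abs(reservoir @ encode @ ket00) ** 2    # Born-rule probabilities
            new_bits[mask] = rng.choice(4, size=mask.sum(), p=probs / probs.sum())
        bits = new_bits                      # raw bitstrings feed forward; qubits are reset
        z0 = 1 - 2 * (bits >> 1)
        z1 = 1 - 2 * (bits & 1)
        features.append([z0.mean(), z1.mean(), (z0 * z1).mean()])
    return np.array(features)

features = run_feedback_reservoir(np.random.default_rng(1).uniform(0, 1, 50))
```

The resulting `features` array has one row per time step and would be the input to the linear readout. On hardware, the same structure would be expressed with mid-circuit measurement and classically conditioned gates rather than with a simulated probability table.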

The architecture was implemented on IBM's ibm_marrakesh superconducting quantum processor, which supports the real-time classical feedforward operations the scheme requires. This capability distinguishes modern dynamic quantum circuits from earlier, more rigid gate models. This is not a theoretical proposal awaiting future hardware, but rather one that runs on quantum devices available today.

What the Experiments Show

The team evaluated their novel architecture across three dimensions: memory retention, predictive capability on chaotic time series, and hardware performance.

All simulation results were averaged over 128 Haar-random unitaries (the random mixing operation at the heart of the quantum reservoir) to ensure robustness, with shot-based sampling used throughout to mirror the statistical noise profile of real quantum hardware.

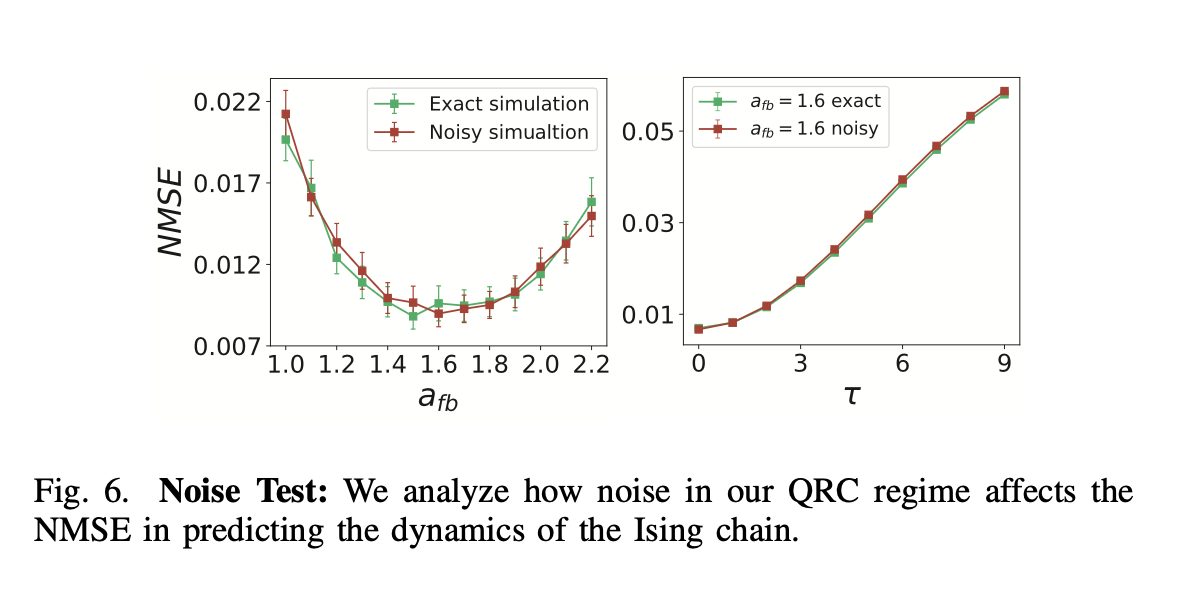

Memory capacity was assessed using the standard short-term memory benchmark, where the model attempts to recall values from a dataset of past inputs. Results confirmed non-zero correlation coefficients at a delay of τ = −1. This constitutes direct evidence that feedback connections are functioning as intended during continuous operation. Performance scaled upward with the number of measurement shots, and was sensitive to the feedback strength parameter: the optimal range sat between values of 1.0 and approximately 2.0, with memory capacity and predictive performance both degrading outside this window.
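One common way to score the short-term memory task is the squared correlation between a trained linear readout and the delayed input. The sketch below assumes that definition; the paper may use a different normalisation or a separate test set, whereas this version evaluates in-sample for brevity. The `delay` argument counts steps into the past, so the article's τ = −1 corresponds to `delay=1` here. The toy features are synthetic and exist only to exercise the function.

```python
import numpy as np

def memory_capacity(features, inputs, delay, washout=10, ridge=1e-8):
    """Squared correlation between a linear readout and the input `delay` steps back."""
    x = np.hstack([features, np.ones((len(features), 1))])[washout:]   # add bias column
    target = inputs[washout - delay : len(inputs) - delay]             # u[k - delay]
    w = np.linalg.solve(x.T @ x + ridge * np.eye(x.shape[1]), x.T @ target)
    pred = x @ w
    return np.corrcoef(pred, target)[0, 1] ** 2

# Synthetic check: one feature is exactly the previous input, the other is pure noise.
rng = np.random.default_rng(0)
u = rng.uniform(0, 1, 500)
toy_features = np.column_stack([np.roll(u, 1), rng.normal(size=500)])
mc_delay_1 = memory_capacity(toy_features, u, delay=1)   # close to 1
mc_delay_3 = memory_capacity(toy_features, u, delay=3)   # close to 0
```

A reservoir with no feedback and a reset after every measurement would be expected to score near zero at every non-zero delay, which is why a non-zero value at one step back is informative.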

Predictive performance was assessed against two well-established benchmark datasets. The first was the Mackey-Glass time series, a classical chaotic time series governed by a delay differential equation. It is used extensively in the classical reservoir computing literature, is cited in works appearing in Phys. Rev. Lett., and can be viewed as a kind of ‘standardized exam’ for the field.

The second was the expectation value of a spin in a one-dimensional chaotic quantum Ising chain, a quantum-native time series. In other words, it is a simulated quantum system in which a row of five quantum magnets evolves chaotically over time, and the task was to predict how one specific magnet in the chain would behave next.
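For readers who want to generate the first benchmark themselves, the Mackey-Glass equation is dx/dt = β·x(t − τ) / (1 + x(t − τ)ⁿ) − γ·x(t). The sketch below integrates it with a simple Euler scheme. The parameter values (τ = 17, β = 0.2, γ = 0.1, n = 10) are the ones commonly used in the reservoir computing literature; whether the paper uses exactly these values, this step size, or this sampling rate is an assumption.

```python
import numpy as np

def mackey_glass(n_samples, tau=17.0, beta=0.2, gamma=0.1, n=10,
                 dt=0.1, x0=1.2, subsample=10):
    """Euler integration of the Mackey-Glass delay differential equation."""
    delay_steps = int(round(tau / dt))
    total = n_samples * subsample + delay_steps
    x = np.full(total + 1, x0)                 # constant history for t <= 0
    for t in range(delay_steps, total):
        x_tau = x[t - delay_steps]             # delayed value x(t - tau)
        x[t + 1] = x[t] + dt * (beta * x_tau / (1 + x_tau ** n) - gamma * x[t])
    # Drop the history segment and keep every `subsample`-th point.
    return x[delay_steps + 1 :: subsample][:n_samples]

series = mackey_glass(500)
```

In a forecasting experiment the series would typically be rescaled to the input range of the encoding gates before use.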

The model achieved a minimum average Normalised Mean Squared Error (NMSE) of 5.07 × 10⁻³ on the Ising chain and 1.4 × 10⁻² on the Mackey-Glass sequence. Both results were obtained with the same two-qubit reservoir: the two-qubit system refers to the architecture itself, while the Ising chain and Mackey-Glass are the benchmark datasets it was tested against, not the hardware configuration.
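As a guide to reading these numbers, here is one widely used definition of NMSE: the mean squared error divided by the variance of the target. This normalisation is an assumption, since several conventions exist and the article does not say which one the paper uses. Under this definition, 0 means a perfect prediction and 1 means the model does no better than always predicting the mean.

```python
import numpy as np

def nmse(y_true, y_pred):
    """Mean squared error normalised by the variance of the target (assumed convention)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.mean((y_true - y_pred) ** 2) / np.var(y_true)

y = np.sin(np.linspace(0, 20, 200))
perfect = nmse(y, y)                              # 0.0
baseline = nmse(y, np.full_like(y, y.mean()))     # 1.0 up to rounding
```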

For a system this small, just two qubits operating under noisy conditions, these are strong results, achieved with a simple calculation at the output stage. Hardware validation on IBM quantum hardware confirmed that the architecture translates from simulated environments to real quantum devices. The QPU results were limited by hardware constraints, not by the architecture itself. The key takeaway is simply that it ran, and it worked.

Finally, a comparison of four reservoir computing architectures, including a classical ESN and the prior feedback-driven QRC from Kobayashi et al., found that all models exhibited the Echo State Property to some degree. The proposed architecture showed a milder form than the others, meaning its initial conditions faded more slowly over time, but the direction of travel was the same. The authors are transparent about this and flag it as an area for future work.

Why This Matters

The significance of this work extends beyond any immediate technical results. The research addresses a structural problem that has limited the practical deployment of quantum machine learning: the inability to combine speed with memory in a single, hardware-compatible architecture.

By demonstrating that feedback connections can operate effectively within the continuous, fast-execution framework enabled by mid-circuit measurements, the research opens a credible path toward QRC systems that are both efficient and capable of handling the kind of complex, time-dependent data processing that real-world applications demand. This is relevant not only for academic benchmarks, but also for any domain where large-scale temporal datasets must be processed quickly and with contextual awareness.

The architecture's ability to handle both classical chaotic time series (Mackey-Glass) and quantum chaotic systems (the Ising chain) within a single unified framework is an early indicator of this breadth and potential applicability. Quantum extreme learning machines and neuromorphic computing architectures face the same fundamental tension between speed and capability. The principles demonstrated here may offer a useful template for both. Photonic quantum systems, an alternative hardware platform for reservoir computing explored in related research, are another area where this approach could prove valuable.

There is also a hardware alignment argument that should not be underestimated. This architecture is explicitly designed around the quantum features of today's superconducting processors, specifically IBM's support for real-time classical feedforward and dynamic quantum circuits. Research that runs on existing quantum devices, rather than the hardware of a decade from now, is the kind of research that is more likely to create tangible, near-term quantum utility.

What Comes Next

The researchers identify several promising directions for future work. Scaling the architecture beyond the minimal two-qubit system used in these experiments is the most immediate open question, and early results suggest this is a direction worth pursuing, with the model's performance under depolarising noise indicating robustness that should persist at larger scales.

Adjusting or removing the reset operations could allow quantum correlations to persist alongside the classical feedback, potentially unlocking richer memory structures. Combining the approach with weak measurements, which extract partial information without fully collapsing the quantum state, could extend memory capacity further still and bring the architecture closer to the more powerful processing regimes explored in related quantum algorithms.

This research represents a well-grounded step toward quantum machine learning architectures that are fast, memory-capable, and executable on near-term hardware. Though the foundational trade-off in QRC has not been fully dissolved, it has been constructively challenged to the point of genuine progress.

Read the full research paper here. To learn more about QuantumBasel's research programmes or to explore collaboration opportunities, get in touch with the QuantumBasel team.
